WEBDAV Test Suite

Documentation

Abstract

This document describes a general and extensible, open source based, WebDAV server testing environment. The testing environment consists of a test suite, containing a series of XML test cases, and the necessary Java programs to execute the test suite and display the results.

Each test case is mainly made up of WebDAV commands and is expressed in XML. The syntax of the tests is fully described within this document.

Currently, the test suite allows for the testing of the standard behavior of a WebDAV class 2 server. The test suite covers the areas of functional, performance and multi-user behavior. Each test suite execution generates an XML protocol report file to allow for error checking and evaluation.

The ‘Ant’ tool controls the test suite and is able to create a deployable, ready to use, zipped file. Further test suite extensions will cover the ACL, Delta-V and DASL standards. This testing environment could be the basis of automated interoperability tests and standard conformance.

This testing environment was originally developed by Software AG and is now donated to the Jakarta-Slide project.

Test Cases

XML Files

General Description

The WebDAV Test Suite consists of a series of test cases distributed within a directory tree.

All test cases are written as well-formed and valid XML documents, and they all conform to a specified DTD. An explanation of "well-formed" and "valid" can be found here: http://www.xml.com/pub/a/98/10/guide3.html.

Each test case consists of several steps, with each step containing one WebDAV command. The XML files are passed into a T-Processor (explained fully later). The T-Processor program picks up the WebDAV requests from the XML document and sends them to the server. The server carries out each request and returns a response. T-Processor picks up this response and compares it against the expected response defined in the XML document. A rough example of an XML test case is shown further down.

Architecture

Example Test Program

test.xml

<test>
  <request>
    <!-- A PUT command is sent to the server to put a document at this location. T-Processor sends this request to the server. -->
    <command>PUT /location/document.html</command>
  </request>
  <response>
    <!-- The expected response is declared here. T-Processor will check the response code from the server against this to see if they match. If they do, it means the test has been successful. -->
    <command>200 OK</command>
  </response>
</test>

NOTE: This example does NOT accurately show the syntax required and is a simplified version for ease of explanation.

The test above causes the T-Processor to issue a PUT command to the specified location. The expected response is shown in this test case as a “200 OK” response code.

To summarize: T-Processor passes the requests outlined in the XML document to the server, and the server’s response is checked by T-Processor against the expected response given in the XML test case. If these match, T-Processor marks the test as successful.

DTD

Every test case must specify a DTD. The DTD describes how the test case should be structured.

For example, it declares that within a ‘request’ element there must be a ‘command’, and that there may be ‘header’ elements and a ‘body’. If the required ‘command’ is missing from a request element, TProcessor will throw an error.

All test cases have a declaration at the start giving the location of the DTD that TProcessor will use to ensure that the structure of the test case is correct. A test will not run without this declaration.

Structure of a Test

A test is made up of many step elements.

Within each of these step elements there is a request and a response defined.

As an XML document the layout is as follows:

<test>
  <step>
    <request> </request>
    <response> </response>
  </step>
</test>
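The layout above can be read with any XML parser. The following is a minimal sketch in Python, not part of the test suite itself; the sample test case, its URLs and the helper name list_steps are assumptions made purely for illustration. It walks the step elements and pairs each request command with its expected response command.

```python
import xml.etree.ElementTree as ET

# Hypothetical test case that follows the <test>/<step> layout shown above.
TEST_CASE = """
<test>
  <step>
    <request><command>PUT /slide/files/a.html HTTP/1.1</command></request>
    <response><command>HTTP/1.1 201 Created</command></response>
  </step>
  <step>
    <request><command>DELETE /slide/files/a.html HTTP/1.1</command></request>
    <response><command>HTTP/1.1 204 No Content</command></response>
  </step>
</test>
"""

def list_steps(xml_text):
    """Return (request command, expected response command) for each step."""
    root = ET.fromstring(xml_text.strip())
    pairs = []
    for step in root.iter("step"):
        request = step.findtext("request/command", default="").strip()
        expected = step.findtext("response/command", default="").strip()
        pairs.append((request, expected))
    return pairs

steps = list_steps(TEST_CASE)
for request, expected in steps:
    print(request, "->", expected)
```

The sketch only reads the structure; it does not send anything to a server and does not validate against the DTD.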

Control Statements

The XML test cases may also contain control statements. These are the ‘repeater’ and ‘thread’ elements.

The repeater control allows a step to be executed n times. The thread control allows a step to be sent to the server in its own thread, in parallel with other threads. This simulates multiple users.

Structure of Test With Repeater

As an XML document the layout is as follows:

<test>
  <repeater repeatCount="n">
    <step> <!-- Step will iterate n times -->
      <request> </request>
      <response> </response>
    </step>
  </repeater>
</test>

Structure of Test With Thread

As an XML document the layout is as follows:

<test>
  <thread>
    <repeater repeatCount="n"> <!-- Repeater element is optional -->
      <step> <!-- Step will iterate n times -->
        <request> </request>
        <response> </response>
      </step>
    </repeater>
  </thread>
</test>

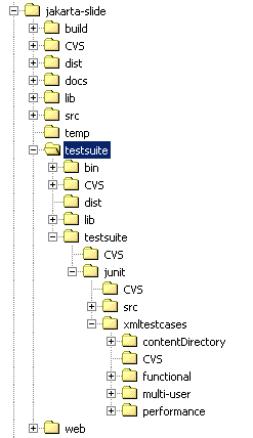

Directory Structure

The current directory structure is as follows:

TestSuite

The TestSuite folder contains all binaries, libraries, sources and test cases needed to execute the standard WebDAV test suite. It contains a “build.xml” file for the “ant” tool to compile and deploy the test suite. The build file provides the following targets:

· All: The default target. Compiles the suite and prepares a deployable zipped file (it calls the targets make and dist).

· make: Compiles all Java sources.

· dist: Creates a deployable zipped file.

TestSuite/bin

The bin folder contains the T-Processor script and some helper shell scripts (currently for Windows only).

· TPROCESSOR.cmd

Usage:
  tprocessor [options]

Options:
  -davhost <webdavserver_host>        (default: localhost)
  -davport <webdavserver_port>        (default: 8080)
  -davname <webdavserver_name>        (default: slide)
  -store <store_to_operate_on>        (default: files)
  -users <number_of_users>            (default: 10)
  -iterations <number_of_iterations>  (default: 10)
  -testcase <xmltestcase_path>        (relative to the home directory)
  -pattern <xmltestcase_pattern>      (wildcard is *, file separator is \\)

If both -testcase and -pattern are omitted, all test cases are executed.

Examples:
  (1) Execute all test cases
      tprocessor
  (2) Execute one specific test case
      tprocessor -testcase \testsuite\junit\xmltestcases\copy\code\copy201.xml
  (3) Execute all XML test cases whose names start with 'copy'
      tprocessor -pattern *\\copy*.xml
  (4) Execute all XML test cases below \testsuite\junit\xmltestcases\copy
      tprocessor -pattern *\\copy\\*.xml

· TErrorsReport.cmd

Usage:
  terrorsreport [options]

Options:
  -infile <testsuite_result_xml>  (default: ..\testsuite\junit\testcasesresults.xml)
  -outfile <errors_report>        (default: ..\testsuite\junit\errors.txt; also allowed: stdout, stderr)

TestSuite/testSuite

Contains the sources and the WebDAV test cases.

TestSuite/testSuite/src

Contains all the sources needed to execute the test suite (mainly T-Processor).

TestSuite/testSuite/xmlTestCases

This folder contains all the XML test cases in nested self-descriptive folders. For example, ‘Copy’ contains all the XML test cases that test the WebDAV Copy command. This directory is structured mainly into 4 sub-directories:

· Functional: The test cases for the functional tests for a class 2 WebDAV server.

· Multi-user: Multi-user tests with a simulated load (by default 10 users).

· Performance: Performance tests (e.g. a Put is tested with different content sizes).

· ContentDirectory: This folder contains all resource files that are used within the test suite. The Put and Get commands all use a file reference to point to the location of these resources. A Put command will ‘Put’ one of these files to the server. Therefore, if the files in here are changed but the names kept the same, all test programs will run using these new resources. This means it is possible to run the test suite with large files, simply by replacing the current files with larger equivalents. For example, to use a larger HTML file, copy the new resource into this directory and give it the name of the already existing HTML file.

T-Processor

General Description

The T-Processor is at the heart of the XML Test Suite. T-Processor takes an XML test case as an argument and parses it to ensure it is valid. The client request is extracted and passed to the server. The server’s response is picked up by T-Processor and compared with the expected server response contained in the XML test document. T-Processor will output to the console window, or to an XML file, all executed steps. This details the method, the URL, the result, and the time taken for the step. The result of the entire test is shown at the end. The overall result, cumulative time, number of steps executed and the number of errors are all shown.

Example of T-Processor Output

Starting test case: C:\test.xml <!-- Name of test case with full path. -->
<testCase>
  <fileName>test.xml</fileName> <!-- Name of test case. -->
  <exceuteStep> <!-- This test case has only one step. -->
    <method>PUT</method> <!-- It puts a file, -->
    <url>server/test.html</url> <!-- to this location. -->
    <result>Success</result> <!-- The T-Processor receives the response code from the server and compares it against the one defined in the XML test case. If they match, ‘Success’ is returned. -->
    <time>100</time> <!-- Total time taken for step. -->
  </exceuteStep>
  <result>Success</result> <!-- The overall result was a success. -->
  <time>120</time> <!-- How long the entire test took. -->
  <testElementCount>1</testElementCount> <!-- How many steps were executed. -->
  <testElementErrors>0</testElementErrors> <!-- How many errors occurred. -->
</testCase>

JUNIT

The following class can be used in conjunction with the “JUnit” tool:

- org.apache.slide.testsuite.testtools.walker.TprocessorTestExecuter

This class will execute the complete WebDAV test suite.

DTD

The DTD defines how each XML test case must be structured. It declares the order in which the elements must appear within the test case. The DTD is located at: Jakarta-slide/testsuite/testsuite/JUNIT/Tprocessor.dtd. It looks as follows:

<?xml version='1.0' encoding='UTF-8' ?>
<!--Generated by XML Authority-->
<!ELEMENT test (specification? , (step | repeater | thread)+ , cleanup? )>
<!ELEMENT cleanup (step | repeater)+>
<!ELEMENT thread (step | repeater)+>
<!ELEMENT repeater (step | repeater | thread)+>
<!ATTLIST repeater repeatCount CDATA '1'
                   varDefinition CDATA ''
                   varUsage CDATA '' >
<!ELEMENT assign (#PCDATA)>
<!ATTLIST assign varDefinition CDATA ''
                 varUsage CDATA '' >
<!ELEMENT step (assign*, user? , password? , request , response)>
<!ATTLIST step executeIn CDATA '' >
<!ELEMENT request (command , header* , body?)>
<!ELEMENT response (command , header* , body?)>
<!ELEMENT specification (abstract+ , pre-Requisite? , description+ , expectedResult+ , exception?)>
<!ELEMENT header (#PCDATA)>
<!ATTLIST header varDefinition CDATA ''
                 varUsage CDATA '' >
<!ELEMENT command (#PCDATA)>
<!ATTLIST command varDefinition CDATA ''
                  varUsage CDATA '' >
<!ELEMENT body (#PCDATA)>
<!ATTLIST body varDefinition CDATA ''
               varUsage CDATA ''
               fileReference CDATA '' >
<!ELEMENT abstract (#PCDATA)>
<!ELEMENT pre-Requisite (#PCDATA)>
<!ELEMENT description (#PCDATA)>
<!ELEMENT expectedResult (#PCDATA)>
<!ELEMENT exception (#PCDATA)>
<!ELEMENT user (#PCDATA)>
<!ATTLIST user varDefinition CDATA ''
               varUsage CDATA '' >
<!ELEMENT password (#PCDATA)>
<!ATTLIST password varDefinition CDATA ''
                   varUsage CDATA '' >
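To make the ordering rule of the DTD concrete, the content model of the step element, (assign*, user?, password?, request, response), can be mirrored by a regular expression over the child tag names. The following Python sketch is an illustration only: the function name step_is_well_ordered is hypothetical, the check covers only the order of the direct children of a step, and it is no substitute for real DTD validation as performed by a validating parser.

```python
import re
import xml.etree.ElementTree as ET

# Content model of <step> taken from the DTD:
# (assign*, user?, password?, request, response)
STEP_MODEL = re.compile(r"(assign )*(user )?(password )?request response ")

def step_is_well_ordered(step):
    """Check that the child elements of a <step> appear in the DTD order."""
    sequence = "".join(child.tag + " " for child in step)
    return STEP_MODEL.fullmatch(sequence) is not None

good = ET.fromstring("<step><user>u</user><request/><response/></step>")
bad = ET.fromstring("<step><response/><request/></step>")
print(step_is_well_ordered(good))
print(step_is_well_ordered(bad))
```

The first step is accepted because user precedes request and response; the second is rejected because response appears before request.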

Diagrams Of DTD

KEY:

REQUIRED

OPTIONAL

Arrows indicate which element may be used after its closure.

Bold parts in the XML layouts indicate the sections that have just been shown in the corresponding diagram.

Diagram of Test Element

XML Layout of Test Element

<test>

<Specification>

</Specification>

<Step or Repeater or Thread>

</Step or Repeater or Thread>

<Cleanup>

</Cleanup>

</test>

Diagram of Specification Element

XML Layout of Specification

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Step or Repeater or Thread>

</Step or Repeater or Thread>

<Cleanup>

</Cleanup>

</test>

Diagram of Step Element

XML Layout of Step

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Step>

<assign></assign>

<user></user>

<password></password>

<request></request>

<response></response>

</Step>

<Cleanup>

</Cleanup>

</test>

Diagram of Repeater Element

XML Layout of Repeater

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Repeater>

<step></step>

OR

<repeater></repeater>

OR

<thread></thread>

</Repeater>

<Cleanup>

</Cleanup>

</test>

Diagram of Thread Element

XML Layout of Thread

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Thread>

<step></step>

OR

<repeater></repeater>

</Thread>

<Cleanup>

</Cleanup>

</test>

Diagram of Cleanup Element

XML Layout of Cleanup

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Step>

<assign></assign>

<user></user>

<password></password>

<request></request>

<response></response>

</Step>

OR

<Thread>

<step></step>

OR

<repeater></repeater>

</Thread>

OR

<Repeater>

<step></step>

OR

<repeater></repeater>

OR

<thread></thread>

</Repeater>

<Cleanup>

<step></step>

OR

<repeater></repeater>

</Cleanup>

</test>

Diagram of Request Element

XML Layout of Request

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Step>

<assign></assign>

<user></user>

<password></password>

<request>

<command></command>

<header></header>

<body></body>

</request>

<response>

</response>

</Step>

<Cleanup>

</Cleanup>

</test>

Diagram of Response Element

XML Layout of Response

<test>

<Specification>

<Abstract></Abstract>

<Pre-Requisite></Pre-Requisite>

<Description></Description>

<Expected Result></Expected Result>

<Exception></Exception>

</Specification>

<Step>

<assign></assign>

<user></user>

<password></password>

<request>

<command></command>

<header></header>

<body></body>

</request>

<response>

<command></command>

<header></header>

<body></body>

</response>

</Step>

<Cleanup>

</Cleanup>

</test>

TProcessor.xml

As explained earlier, when TProcessor receives a response from the server, it is compared against the expected response defined within the XML test case. By using the TProcessor.xml file, it is possible to exclude some of the returned headers from the comparison. For example, the Last-Modified header that is returned will be different each time the test case is run, so it is impossible to define an expected value for it. Therefore, TProcessor is configured to exclude it: whatever value the server returns for Last-Modified, the test will not fail because of it.

The TProcessor.xml document also allows for exclusion of XML elements. For example, PropFind response elements.
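The effect of such an exclusion list can be sketched as follows. This Python fragment is illustrative only: the format of TProcessor.xml is not reproduced in this document, so the excluded names are hard-coded in a hypothetical set, and the assumption that only the headers named in the expected response are checked is likewise made for the sake of the example.

```python
# Header names excluded from comparison. This set is a hypothetical stand-in
# for whatever TProcessor.xml actually lists.
EXCLUDED_HEADERS = {"last-modified", "date"}

def headers_match(expected, actual, excluded=EXCLUDED_HEADERS):
    """Compare expected headers with the actual ones, skipping excluded names."""
    actual_lower = {name.lower(): value for name, value in actual.items()}
    for name, value in expected.items():
        key = name.lower()
        if key in excluded:
            continue  # value differs on every run, so it is never compared
        if actual_lower.get(key) != value:
            return False
    return True

expected = {"Content-Type": "text/html", "Last-Modified": "anything"}
actual = {"content-type": "text/html",
          "Last-Modified": "Tue, 01 Jan 2002 10:00:00 GMT"}
print(headers_match(expected, actual))
```

Header names are compared case-insensitively here because HTTP header names are case-insensitive.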

TestCasesResults.xml

When a JUNIT test is run, the results are output to a console window and also to an XML file. The XML file is located at: Jakarta-slide/testsuite/testsuite/junit/testcasesresults.xml. It contains the name of each test case that has been run and the details of each step executed within it. It also indicates whether the step has been successful. If the step has failed, TProcessor will indicate both what was expected and what was actually received.

<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE testSuite SYSTEM "testCasesResults.dtd">
<testSuite>
  <excecutionDate>DD.MM.YY HH:MM</excecutionDate>
  <resultFileName>testCasesResults.xml</resultFileName>
  <testCase>
    <fileName>test.xml</fileName> <!-- Name of test case. -->
    <exceuteStep>
      <method>PUT</method>
      <url>/server/files/test.html</url>
      <result>Success</result>
      <time>100</time>
    </exceuteStep>
    <result>Success</result>
    <time>100</time>
    <testElementCount>5</testElementCount>
    <testElementErrors>0</testElementErrors>
  </testCase>
</testSuite>
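A result file of this shape is easy to post-process. The Python sketch below is not the TErrorsReport tool; it is a small illustration that assumes the element names shown in the example above and treats any test case whose overall result is not “Success”, or whose error count is above zero, as failed. The sample data and the function name summarize are made up for the example.

```python
import xml.etree.ElementTree as ET

# Hypothetical excerpt in the shape of testcasesresults.xml.
RESULTS = """
<testSuite>
  <testCase>
    <fileName>test.xml</fileName>
    <exceuteStep>
      <method>PUT</method>
      <url>/server/files/test.html</url>
      <result>Success</result>
      <time>100</time>
    </exceuteStep>
    <result>Success</result>
    <time>100</time>
    <testElementCount>1</testElementCount>
    <testElementErrors>0</testElementErrors>
  </testCase>
</testSuite>
"""

def summarize(results_xml):
    """Return (file name, overall result, error count) for each test case."""
    root = ET.fromstring(results_xml.strip())
    summary = []
    for case in root.iter("testCase"):
        name = case.findtext("fileName", default="").strip()
        result = case.findtext("result", default="").strip()  # direct child only
        errors = int(case.findtext("testElementErrors", default="0"))
        summary.append((name, result, errors))
    return summary

summary = summarize(RESULTS)
failed = [row for row in summary if row[1] != "Success" or row[2] > 0]
print(summary)
print(failed)
```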

Language

The syntax for T-Processor is very simple. The current test cases and DTD example above should give you a rough idea as to the layout and syntax.

However, there are a few parts that need to be explained.

NOTE: Some of these code examples are not complete. XML definitions, DTD definitions, specification, header and body for request, response and cleanup have been left out for ease of explanation.

Variables

Variables are used to store and carry information from one step to another (e.g. a lock token) or to hold values throughout the test case (e.g. control of parallel threads). Variables can be used or declared within a step, or passed into the test suite via the Java command line.

Before an existing variable can be used, its name must be specified within the element that requires the variable:

<command varUsage="varName">………</command>

The variable varName is declared for use within the command element. This is done with the varUsage attribute.

The variable varName can now be accessed within the element:

<command varUsage="varName">%varName%</command> <!-- Note the use of “%” around varName, showing that the value of the variable is used. -->

It is possible to define new “user defined” variables via the “assign” XML element:

<assign varDefinition="varName">initialvalue</assign> <!-- A new variable has been created with the value “initialvalue”. -->

These user variables are explained in more detail below.

There are four types of variables used by TProcessor:

1. User Defined;

2. Global Variables;

3. Repeat Variables;

4. Automated Variables.

User Defined

A user-defined variable can be declared and assigned a value within a step. The following piece of code defines and uses a variable called “aVar”.

<assign varDefinition="aVar">initialValue</assign> <!-- A variable called “aVar” is declared and assigned the string “initialValue”. -->
<assign varUsage="aVar" varDefinition="aVar">%aVar% - - Next</assign> <!-- varUsage declares that the variable is used within this element. To use the variable, its name must be enclosed in “%”. -->

After the execution of the above statements the value of “aVar” would be “initialValue - - Next”. It should be noted that before “aVar” can be used (with the syntax “%aVar%”), its use must be declared in the tag of the element wishing to use the variable.
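The substitution rule can be illustrated with a short Python sketch. It is a model of the behaviour described above, not TProcessor's actual implementation: the function name resolve is hypothetical, and leaving undeclared names untouched is an assumption, since the document does not say what TProcessor does in that case.

```python
import re

def resolve(text, var_usage, variables):
    """Replace %name% with its value, but only for names listed in varUsage."""
    declared = {name.strip() for name in var_usage.split(",") if name.strip()}

    def substitute(match):
        name = match.group(1)
        # Assumption: undeclared or unknown names are left as they are.
        if name in declared and name in variables:
            return variables[name]
        return match.group(0)

    return re.sub(r"%(\w+)%", substitute, text)

variables = {}
# <assign varDefinition="aVar">initialValue</assign>
variables["aVar"] = resolve("initialValue", "", variables)
# <assign varUsage="aVar" varDefinition="aVar">%aVar% - - Next</assign>
variables["aVar"] = resolve("%aVar% - - Next", "aVar", variables)
print(variables["aVar"])  # initialValue - - Next
```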

Global Variables

All Global Variables are initialized during TProcessor start-up. They are assigned a value when TProcessor is called on the Java command line. The following code is used to assign a global variable.

-Dxdav.globalVariable<nameOfGlobalVariable>=<value>

For example: -Dxdav.globalVariableServerName=slide

The global variable can then be used as follows:

<element varUsage="globalVariable<name>">..%globalVariable<name>%..</element>

For example:

<element varUsage="globalVariableServerName">%globalVariableServerName%</element>

There are five global variables used in the WebDAV test suite: globalVariableServerName, globalVariableCollection, globalVariableUsers, globalVariableIterationCount, and globalVariableIterationCountSmall.

GlobalVariableServerName is passed in by the T-Processor script. This is the name of the server on which the commands are carried out. For example, if “slide” is passed in, a Put command would put to the location http://localhost/slide/…..

GlobalVariableCollection is passed in by the T-Processor script. This is the collection on the server that the XML tests use as a root directory.

For example, if ‘files’ is passed in, ‘files’ will be used as the root and all Mkcols, Puts, etc. will be derived from it, e.g. http://localhost/slide/files/

So to use the two together the entire statement looks as follows:

<command varUsage="globalVariableServerName,globalVariableCollection"> <!-- Declares that globalVariableServerName and globalVariableCollection are used within this command. -->
MKCOL /%globalVariableServerName%/%globalVariableCollection%/test HTTP/1.1
<!-- Assuming ‘slide’ has been passed in as globalVariableServerName and ‘files’ has been passed in as globalVariableCollection, this command will resolve to: MKCOL /slide/files/test HTTP/1.1. A collection named test is created at the location slide/files. -->
</command> <!-- Command closed -->
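The path from the command line to the resolved request line can be sketched in Python. This is an illustration, not the way the Java test suite reads its system properties: the helper names parse_globals and expand are hypothetical, and the option for the collection is inferred from the -Dxdav.globalVariable<name>=<value> pattern given above.

```python
PREFIX = "-Dxdav."

def parse_globals(args):
    """Collect -Dxdav.globalVariable<Name>=<value> options into a dict."""
    found = {}
    for arg in args:
        if arg.startswith(PREFIX + "globalVariable") and "=" in arg:
            name, value = arg[len(PREFIX):].split("=", 1)
            found[name] = value
    return found

def expand(command, variables):
    """Replace every %name% placeholder with the value of that variable."""
    for name, value in variables.items():
        command = command.replace("%" + name + "%", value)
    return command

args = ["-Dxdav.globalVariableServerName=slide",
        "-Dxdav.globalVariableCollection=files"]
variables = parse_globals(args)
command = expand(
    "MKCOL /%globalVariableServerName%/%globalVariableCollection%/test HTTP/1.1",
    variables,
)
print(command)  # MKCOL /slide/files/test HTTP/1.1
```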

GlobalVariableUsers is passed in by the T-Processor script. This is the number of parallel users that the tester wishes to simulate using the server.

The variable is used in conjunction with the repeat counter to determine how many user threads are opened. Most of the multi-user test cases, within the XML test suite, use this variable to control how many threads are generated. Therefore, it is possible to run the test suite with as many simulated users as required. For example, with the code below, if ‘5’ is passed in as ‘globalVariableUsers’, 5 threads are opened simulating 5 users. They will all then try to put at the same time. If ‘100’ is passed in, 100 users will try to put at the same time.

Another example is shown in the Repeat Counter section.

<repeater varUsage="globalVariableUsers" repeatCount="%globalVariableUsers%">
  <!-- globalVariableUsers is declared as being used. Its value is then assigned to the repeat counter. -->
  <thread>
    <!-- Threads equal to the repeatCount, which has the same value as globalVariableUsers, are opened. -->
    <step>
      <command PUT ……> .. </command>
    </step>
  </thread>
</repeater>

GlobalVariableIterationCount is passed in by the T-Processor script. It can be used to control repeat counters. Within the test suite there are tests that repeat an individual step, sometimes within a thread. This iteration is controlled by the value of GlobalVariableIterationCount. An explanation of the repeater is given later. Most of the multi-user test cases within the XML test suite use repeat counters controlled by GlobalVariableIterationCount, which gives greater control over the test cases. For a light test, ‘5’ can be passed in as the iteration count, and steps using the variable will be iterated only 5 times. In the example below, this means that each of the globalVariableUsers users attempts to Put 5 times. To put a high load onto a server, the variable can be set to 100 instead. In the example below, each of the globalVariableUsers users would then attempt to Put 100 times.

<repeater varUsage="globalVariableUsers" repeatCount="%globalVariableUsers%">
  <thread>
    <repeater varUsage="globalVariableIterationCount" repeatCount="%globalVariableIterationCount%">
      <step> <!-- The step is iterated %globalVariableIterationCount% times. -->
        …..
      </step>
    </repeater>
  </thread>
</repeater>

GlobalVariableIterationCountSmall is passed in by the T-Processor script. It has a similar function to GlobalVariableIterationCount. Within the XML test suite, it is used when repeating copy and move commands. These test programs normally copy or move from a to b and then from b to a, so the two steps only need to iterate half as many times to create the same load as other tests. For example, if 10 is passed in as GlobalVariableIterationCountSmall, the code below causes the 2 copy commands to be executed 10 times by each of the %globalVariableUsers% users. This means that each user sends 20 separate copy commands to the server.

<repeater varUsage="globalVariableUsers" repeatCount="%globalVariableUsers%">
  <thread>
    <repeater varUsage="globalVariableIterationCountSmall" repeatCount="%globalVariableIterationCountSmall%">
      <!-- globalVariableIterationCountSmall is declared as being used. Its value is then assigned to the repeat counter. -->
      <step> <!-- The step is iterated %globalVariableIterationCountSmall% times. -->
        <command>Copy /server/files/a/test.html</command> <destination>server/files/b/test.html</destination>
      </step>
      <step>
        <command>Copy /server/files/b/test.html</command> <destination>server/files/a/test.html</destination>
      </step>
    </repeater>
  </thread>
</repeater>
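The load produced by this arrangement is simple arithmetic: every iteration runs two copy steps, so each user issues twice the iteration count, and the total is that figure multiplied by the number of users. A small Python sketch with a hypothetical helper name, using the default of 10 users from the T-Processor script:

```python
def copy_requests(users, iteration_count_small, steps_per_iteration=2):
    """Return (requests per user, total requests) for the nested repeaters."""
    per_user = iteration_count_small * steps_per_iteration
    return per_user, per_user * users

per_user, total = copy_requests(users=10, iteration_count_small=10)
print(per_user, total)  # 20 200
```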

Repeat Variables

A repeat variable is defined within the repeat element. At each iteration, the variable is assigned the current value of the repeat counter (repeatCount). The repeatCount starts at 1 and increments by 1 after each iteration.

<repeater varDefinition="i" repeatCount="10">
  <!-- The variable “i” runs alongside the repeatCount, holding the same value. It is incremented by one at the same time. -->
  <step> <!-- The step is iterated 10 times. -->
    <command varUsage="i">PUT /server/files/test/%i%.html</command>
    <!-- “i” increments along with the repeat counter, meaning this statement will Put files 1.html to 10.html inclusive. -->
  </step>
</repeater>
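The expansion performed by the repeater can be pictured with a few lines of Python. This is only a model of the counting behaviour described above (the counter starts at 1 and ends at repeatCount); the function name expand_repeater is hypothetical.

```python
def expand_repeater(template, var_name, repeat_count):
    """Return the command for each iteration; the counter runs 1..repeat_count."""
    return [template.replace("%" + var_name + "%", str(i))
            for i in range(1, repeat_count + 1)]

commands = expand_repeater("PUT /server/files/test/%i%.html", "i", 10)
print(commands[0])   # PUT /server/files/test/1.html
print(commands[-1])  # PUT /server/files/test/10.html
```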

Automated Variables

Currently there is only one type of automatic variable defined. This is used to store the value of a lock token. When a successful lock is issued, a new automatic variable will be created with the name “automaticVariable<n>”, where <n> is the current number of variables already defined plus one. For example, the first lock command would result in the generation of a variable named “automaticVariable1”, the next lock command will use “automaticVariable2” and so on. It should be noted that at each repeat iteration, the automated variables created in the previous iteration are removed again.

Syntax

XML Declaration

At the start of each test case, it must be declared that the file is XML. This should be defined with the following line:

<?xml version="1.0" encoding="UTF-8"?>

DTD

Following the XML declaration, a DTD needs to be defined at the beginning of each test case. This allows TProcessor to validate the test to ensure it is valid XML. A DTD is defined as follows:

<!DOCTYPE test SYSTEM "c:\Tprocessor.dtd">

With the XML test suite the DTD is located at:

Jakarta-slide/testsuite/testsuite/JUNIT/Tprocessor.dtd. All test cases reference this. It is possible to use relative paths to point to the DTD. All test cases within the XML test suite use a relative path. Therefore, the DTD should not be moved.

Thread

The thread command allows simulation of several commands being issued to the server at once. The steps specified in the first thread are executed in parallel to those specified in the second thread. Within each thread, the steps are executed in the same order as specified in the test case. Thread tags can be placed around a step or a repeater.

<thread> <!-- Thread A -->
  <step> <!-- Step 1 -->
    …
  </step>
  <step> <!-- Step 2 -->
    ….
  </step>
  .
  .
  .
  <step> <!-- Step n -->
    ….
  </step>
</thread>
<thread> <!-- Thread B -->
  <step> <!-- Step 1 -->
    …
  </step>
  <step> <!-- Step 2 -->
    ….
  </step>
  .
  .
  .
  <step> <!-- Step n -->
    ….
  </step>
</thread>
<!-- Both thread A and B are sent to the server in parallel. Step 1 in thread A will be executed at the same time as Step 1 in thread B. Step 2 in thread A is then executed at the same time as Step 2 in thread B. This continues up to Step n in threads A and B. -->

Because the thread is normally used with the repeater, it is explained fully below.
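As a rough analogy, two thread elements behave like two worker threads that each run their own list of steps in order. The Python sketch below is an assumption-laden illustration, not the T-Processor implementation: the steps are plain strings instead of WebDAV requests, and the sketch guarantees only the order within each thread; it does not attempt to line up step k of one thread with step k of the other.

```python
import threading

def run_thread(name, steps, log, lock):
    """Execute the steps of one <thread> element in their listed order."""
    for step in steps:
        with lock:  # protect the shared log
            log.append((name, step))

log = []
lock = threading.Lock()
threads = [
    threading.Thread(target=run_thread, args=("A", ["step 1", "step 2"], log, lock)),
    threading.Thread(target=run_thread, args=("B", ["step 1", "step 2"], log, lock)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()

order_a = [step for name, step in log if name == "A"]
order_b = [step for name, step in log if name == "B"]
print(order_a, order_b)
```

How the entries of A and B interleave in the log may differ from run to run, which is exactly the behaviour a multi-user test is meant to exercise.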

Repeater

The repeater allows steps to be iterated. Anything within repeater tags will be executed the specified number of times. The repeater can be placed around a step, a thread or, to allow for nesting, a repeater.

NOTE: In the following examples some of the exact coding has been omitted and replaced with ‘….’. This is for ease of explanation.

For example, to loop a series of steps ‘n’ times, the following code would be used.

Example 1

<repeater repeatCount="n">
  <!-- Repeat n times. Change this value to the number of iterations required. Everything within the repeater tags will iterate n times. -->
  <step>….</step>
  <step>….</step>
  .
  .
  <step>….</step>
  <step>….</step>
</repeater>

The repeater can be linked to the global variable “GlobalVariableIterationCount”. This causes all commands within the repeat tags to iterate to the value of “GlobalVariableIterationCount”.

For example to loop a step “GlobalVariableIterationCount” times the following piece of code would be used:

Example 2

<repeater varUsage="globalVariableIterationCount" repeatCount="%globalVariableIterationCount%">
  <!-- This declares that the repeater uses globalVariableIterationCount and assigns its value to the repeatCount. Note the use of % around the variable when it is being used. -->
  <step> … </step>
</repeater>

The repeater can be linked to the thread command allowing simulation of multiple users sending requests to the server.

For example, to allow ten users to simultaneously create a collection, the following piece of code would be used. In this example, 10 Threads are opened. Each thread then executes, in parallel, the steps specified, in the same order as they appear within the test case.

Example 3

<repeater repeatCount="10">
  <thread>
    <step>
      <command MKCOL.....> <!-- This command is threaded 10 times, simulating 10 users all issuing a MKCOL command at once. -->
    </step>
  </thread>
</repeater>

More than one step can be placed within a repeater:

Example 4

<repeater repeatCount="5">
  <thread>
    <step>
      <command MKCOL.....> <!-- This command is threaded 5 times, simulating 5 users all issuing a MKCOL command at once. -->
    </step>
    <step>
      <command PUT.....> <!-- This command is threaded 5 times, simulating 5 users all issuing a PUT command at once. -->
    </step>
  </thread>
</repeater>

Many threads can be used, allowing for the simulation of many users issuing many commands.

For example, 1 user could carry out a MKCOL whilst another Puts a resource.

Example 5

<thread>
  <step>
    <command MKCOL.....>
  </step>
</thread>
<thread>
  <step>
    <command PUT.....>
  </step>
</thread>
<!-- Both commands are threaded, meaning one user performs a MKCOL whilst another PUTs. -->

As well as simulating n users issuing a command once (Examples 4 and 5), it is also possible to simulate n users each carrying out a command m times.

For example, the following piece of code simulates 5 users each doing 10 Puts. The Put commands are threaded, so this test case can be thought of as 5 users all putting at the same time, each repeating the Put 10 times.

<repeater repeatCount="5">
  <thread>
    <repeater repeatCount="10">
      <step>
        <request>
          <command>PUT…</command>
        </request>
      </step>
    </repeater>
  </thread>
</repeater>

The only limitation with the above pieces of code is that as only one Put command is issued, only one URL can be specified. This, in itself, is a useful test; many users issuing commands to the same resource. If n users are performing a put on to the same resource, either a response code of “200 OK” or “409 Conflict” is normally expected. To pick up multiple response codes, all expected codes should be enclosed in brackets, separated by a comma, with no whitespace.

<response>
  <command>HTTP/1.0 (200,409) OK</command>
  <!-- Either 200 OK or 409 Conflict is expected. The step passes if either of these response codes is received. -->
</response>
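To make the bracket syntax concrete, the following Python sketch shows one way an expected status line could be turned into a set of acceptable codes. It is an assumption about how such matching might work, not a description of T-Processor's code; `expected_codes` and `step_passes` are hypothetical helpers.

```python
import re

def expected_codes(status_line):
    """Return the set of status codes accepted by an expected status line.

    Accepts a single code ("HTTP/1.0 200 OK") or a bracketed,
    comma-separated list without whitespace ("HTTP/1.0 (200,409) OK").
    """
    match = re.match(r"^HTTP/\d\.\d \((\d{3}(?:,\d{3})*)\)", status_line)
    if match:
        return {int(code) for code in match.group(1).split(",")}
    match = re.match(r"^HTTP/\d\.\d (\d{3})\b", status_line)
    if match:
        return {int(match.group(1))}
    raise ValueError("unrecognised status line: " + status_line)

def step_passes(expected_line, actual_code):
    """A step passes if the received code is one of the expected codes."""
    return actual_code in expected_codes(expected_line)

print(sorted(expected_codes("HTTP/1.0 (200,409) OK")))
```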

However, sometimes it is useful to test a server by simulating many users issuing commands to separate resources. If this is required, separate collections or resources need to be created and then the commands issued against those.

<test>
  <step>
    <command>MKCOL /server/test/new HTTP/1.1</command>
    ….
  </step>
  <!-- Because so many files are going to exist at the end, it is easier to have one containing collection that can be deleted at cleanup, rather than having to use a repeater and delete each individual file. -->
  <repeater varUsage="globalVariableUsers" varDefinition="userNumber" repeatCount="%globalVariableUsers%">
    <!-- Declaration and setting of the variables for the repeater. globalVariableUsers is declared as being used. Its value is then assigned to repeatCount. -->
    <step>
      <request>
        <command varUsage="userNumber">MKCOL /server/test/new/user%userNumber% HTTP/1.1</command>
        <!-- The variable userNumber is incremented with each repeat loop. It is set to 1 to start with and will create /user1, /user2, /user3 and so on until the repeater stops. -->
        ….
    </step>
  </repeater>
  <repeater varUsage="globalVariableUsers" varDefinition="userNumber" repeatCount="%globalVariableUsers%">
    <thread>
      <repeater repeatCount="50">
        <step>
          <request>
            <command varUsage="userNumber">PUT /server/test/new/user%userNumber%/file.html HTTP/1.1</command>
            <!-- %userNumber% is resolved to point to each user's individual collection. -->
            …..
        </step>
      </repeater>
    </thread>
  </repeater>
  <!-- %globalVariableUsers% threads are created, one for each user. The PUT command is issued 50 times by each user. Care should be taken with the placing of the thread tags; otherwise, in the case of this test, User 1 would put 50 times, then User 2, then User 3 and so on. -->
  <cleanup>
    <step>
      <command>DELETE /server/test/new HTTP/1.1</command>
      …..
    </step>
  </cleanup>
</test>
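The way a repeater counter is substituted into a command can be sketched in a few lines of Python. This is a simplified model under stated assumptions (placeholders of the form %name%, a counter that starts at 1); `resolve` and `expand_repeater` are hypothetical names and do not come from T-Processor.

```python
import re

def resolve(template, variables):
    """Replace every %name% placeholder with its value from `variables`."""
    def lookup(match):
        return str(variables[match.group(1)])
    return re.sub(r"%(\w+)%", lookup, template)

def expand_repeater(template, counter_name, repeat_count):
    """Resolve `template` once per iteration, counting from 1."""
    return [resolve(template, {counter_name: n})
            for n in range(1, repeat_count + 1)]

commands = expand_repeater(
    "MKCOL /server/test/new/user%userNumber% HTTP/1.1", "userNumber", 3)
for command in commands:
    print(command)
```

With a repeat count of 3, the sketch yields one MKCOL per user collection, /user1 to /user3, which mirrors the first repeater in the test case.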

Step

A step is essentially a WebDAV command. It is made up of two parts, a request and a response. The request is the part picked up by T-Processor and sent to the server; it contains a command (the WebDAV method and URI) and several headers. The response is the part compared with the response actually received from the server; it contains a command (the expected response code) and several headers. The structure of a step is exactly the same as that of the corresponding WebDAV request, the only differences being that variables may be used and certain headers can be left out.

For example, the following code would send a Put command to the server.

<step>
  <request>
    <command>PUT /server/test/put.html HTTP/1.1</command>
    <!-- The Command contains: The command to be sent, then the URL for the command to be carried out upon. -->
    <header>Translate: f</header>
    <header>Connection: Keep-Alive</header>
    <!-- All standard headers required for a put command. -->
    <body>
      <!-- The entire HTML document is entered. -->
    </body>
  </request>
  <response>
    <!-- This response must match the received response to result in a successful test -->
    <command>HTTP/1.0 200 OK</command>
    <!-- This is the major part that T-Processor checks for a match. The Response Code. -->
    <header>Date: </header>
    <!-- The date header can contain any date. T-Processor does not check for an exact match. -->
    <header>Content-Language: en</header>
  </response>
</step>

The request and response headers within a step are specific to each command. Most are self-explanatory, but a few need explaining.

Put

A put command requires the document that is to be ‘put’ to be declared within the body.

<step>

<request>

<command>PUT /server/test/resource1.html

HTTP/1.1</command>

<header>Translate: f</header>

<header>Connection: Keep-Alive</header>

<body><![CDATA[<html><head></head><body><FONT

SIZE="+1">This is a Test html

Document</FONT></br></body></html>]]></body>

<!-- This is the actual HTML document that will be uploaded.

-->

</request>

<response>

<command>HTTP/1.0 200 OK</command>

<header>Date: </header>

<header>Content-Language: en</header>

</response> </step>

The example above puts a small HTML document.

Sometimes it is required for large documents to be put onto the server. It is impractical to include an HTML document of a large size into the body of a put statement. To assist with this, it is possible to define a path within the put statement, pointing to the location of the file to be ‘put’. To achieve this, a “fileReference” is defined within the body of the request and given the path of the file. For example, this piece of code would put the document test.html stored in C:\testprograms to the location specified.

<step>

<request>

<command>PUT /server/test/test.html HTTP/1.1</command>

<header>Accept-Language: en-us</header>

<header>Translate: f</header>

<header>User-Agent: Microsoft Data Access Internet Publishing Provider DAV</header>

<header>Connection: Keep-Alive</header>

<body fileReference="C:\testprograms\test.html"></body>

<!-- The file at the fileReference location will be uploaded to the server. Any data in the body tags will be ignored. -->

</request>

<response>

<command>HTTP/1.0 200 OK</command>

<header>Content-Language: en</header>

</response> </step>

Using this option, it is possible to upload any file including image and audio files.

All test cases within the XML test suite currently use this upload option with the Put and Get commands. The individual files used are stored in the ContentDirectory. This makes the test suite more flexible and powerful, because the entire suite can be run with large or small files simply by changing the files in this directory. As long as the names and locations remain the same, the new resources will be used. It is also possible to use a relative path to specify the location of the resource that will be ‘put’. All test cases within the XML test suite use relative paths, so the contentDirectory folder and its contents should not be moved; otherwise the test cases will not function correctly.
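The rule that a file reference takes priority over inline body text, and that a relative reference is resolved against some base directory, can be sketched as follows. This is a hypothetical Python illustration; `request_body` and its `base_dir` parameter are assumptions, and the directory against which T-Processor actually resolves relative paths is not stated here.

```python
import os
import tempfile

def request_body(inline_text, file_reference=None, base_dir="."):
    """Return the bytes to upload for a <body> element.

    If a file reference is given, the file content is used and any
    inline text is ignored. Relative references are resolved against
    `base_dir` (an assumed parameter).
    """
    if file_reference:
        path = file_reference
        if not os.path.isabs(path):
            path = os.path.join(base_dir, path)
        with open(path, "rb") as handle:
            return handle.read()
    return inline_text.encode("utf-8")

# Demonstration with a temporary directory standing in for the content folder.
with tempfile.TemporaryDirectory() as content_dir:
    with open(os.path.join(content_dir, "test.html"), "wb") as handle:
        handle.write(b"<html></html>")
    from_file = request_body("ignored", "test.html", base_dir=content_dir)

inline = request_body("<p>inline</p>")
print(from_file, inline)
```

Reading the file as bytes keeps the sketch usable for image and audio files as well as HTML.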

Get

Within the body of the Get response statement, the expected content is defined. TProcessor compares this against the body received from the server, to check that the resource has been correctly returned.

<step> <request>

<command>GET /server/test/file.html HTTP/1.1</command> <!-- The location of

the file that is being sought. --> <header>Accept: */*</header> <header>Accept-Encoding: gzip,

deflate</header> <header>Connection: Keep-Alive</header> </request> <response> <command>HTTP/1.0 200 OK</command> <header>Content-Type:

text/html</header> <body> <![CDATA[<html><head></head><body><FONT

SIZE="+1">This is a Test html

Document</FONT></br></body></html>]]> <!-- The exact html that is

expected back is defined within the body. --> </body> </response> </step>

Instead of hard coding the expected response, it is possible to enter the location of a file that TProcessor will compare the received body against.

<step> <request>

<command>GET /server/test/file.html HTTP/1.1</command> <!-- The location of

the file that is being sought. --> <header>Accept:

*/*</header> <header>Accept-Encoding: gzip,

deflate</header> <header>Connection: Keep-Alive</header> </request> <response> <command>HTTP/1.0 200 OK</command> <header>Content-Type:

text/html</header> <body fileReference="C:\test.html">

<!-- The location of the file that will be

compared against the received response from the server. Any text within the

body tags is ignored, the file always takes priority. -->

</body> </response> </step>

All Gets within the XML Test Suite use this file reference. This makes the test suite more powerful, as the content of these resources can easily be changed. All the files used by the file reference commands are located in the ContentDirectory folder. If a new resource is placed in this directory and given the name of an existing one, the new resource is used in its place, and all test cases that used the previous file automatically use the new resource for PUTs and GETs.

File references also keep the test cases as small as possible. For example, if 50 files are to be put at different locations, 50 separate put statements are needed. Without file references, the file to be put would have to be hard coded into the body of each put command; if the same file is put each time, this means a lot of replicated code. With a file reference, only one line is needed in each statement to put the same resource. It is also possible to use relative paths to reference the file. All test cases within the XML test suite use file references, so it is important that the contentDirectory folder and the files within it are not moved; otherwise the tests will not function correctly.

Lock/Unlock

When a lock is placed upon a resource, a lock token is issued. This is a unique key that must be sent again to unlock the resource.

This lock token is stored by the T-Processor in an automated variable. The variable is used again in the unlock command.

<step> <request> <command>LOCK

/server/test/file.html HTTP/1.1</command> <!-- Command

to lock resource at specified URI. --> <header>Connection: Keep-Alive,

TE</header> <header>TE: trailers, deflate, gzip,

compress</header> <header>Timeout: Second-604800</header> <header>Accept-Encoding: deflate, gzip,

x-gzip, compress, x-compress</header> <header>Content-type:

text/html</header> <!--

All standard headers needed for lock. --> <body><![CDATA[<?xml

version="1.0"?><A:lockinfo

xmlns:A="DAV:"><A:locktype><A:write/></A:locktype><A:lockscope><A:exclusive/></A:lockscope><A:owner><A:href></A:href></A:owner></A:lockinfo>]]></body>

<!--

The contents of the lockscope tags, in this case ‘exclusive’, allow control

of the scope of the lock. --> </request> <response> <command>HTTP/1.0 200

OK</command> <!-- Response code expected as a result of a lock request.

--> <body

varUsage="globalVariableCollection"> <![CDATA[<?xml version="1.0"

encoding="utf-8" ?><d:prop

xmlns:d="DAV:"><d:lockdiscovery><d:activelock><d:locktype><d:write/>

</d:locktype><d:lockscope><d:exclusive/></d:lockscope><d:depth>Infinity

</d:depth><d:owner></d:owner><d:timeout>Second-604800</d:timeout><d:locktoken> <d:href> <!-- The returned lock token does not need to

be defined. It would be impossible to specify an expected value, as the

lock token is different each time. It is excluded from checking by

TProcessor.xml. Any value is passed and stored within the

automaticVariable. --> </d:href>

</d:locktoken></d:activelock></d:lockdiscovery> </d:prop>]]></body> </response> </step> <step> <request> <command >UNLOCK

/server/test/file.html HTTP/1.1</command> <header>Connection: Keep-Alive,

TE</header> <header>TE: trailers, deflate, gzip,

compress</header> <header

varUsage="automaticVariable1">Lock-Token: %varUsage%</header>

<!--During

the lock, the lock token has been stored in automaticVariable1. The usage

of this variable must be declared. It is then referred to by varUsage.

--> <header>Accept-Encoding: deflate,

gzip, x-gzip, compress, x-compress</header> <!-- All the others

headers are standard headers used in an Unlock request. --> </request> <response> <command>HTTP/1.0 204 No

Content</command> <!-- Response code expected as a result of the unlock

request. --> </response> </step>

As explained earlier, when a lock is placed upon a resource, the unique lock token that is returned is stored within an automatic variable. This automatic variable is then used within the unlock command to send the lock token back to the server.

Each time a new lock is created, the number n in automaticVariable<n> is incremented by one and the lock token is stored in that variable. In the example below, two files are locked and then unlocked.

<step> <request> <command>LOCK /server/test/file.html

HTTP/1.1</command> <header>Connection: Keep-Alive,

TE</header> <header>TE:

trailers, deflate, gzip, compress</header> <header>Timeout:

Second-604800</header> <header>Accept-Encoding:

deflate, gzip, x-gzip, compress, x-compress</header> <header>Content-type:

text/html</header> <body><![CDATA[<?xml

version="1.0"?><A:lockinfo

xmlns:A="DAV:"><A:locktype><A:write/></A:locktype><A:lockscope><A:exclusive/></A:lockscope><A:owner><A:href></A:href></A:owner></A:lockinfo>]]></body>

</request> <response> <command>HTTP/1.0 200 OK</command> <body

varUsage="globalVariableCollection"> <![CDATA[<?xml version="1.0"

encoding="utf-8" ?><d:prop

xmlns:d="DAV:"><d:lockdiscovery><d:activelock><d:locktype><d:write/>

</d:locktype><d:lockscope><d:exclusive/></d:lockscope><d:depth>Infinity

</d:depth><d:owner> </d:owner><d:timeout>Second-604800</d:timeout><d:locktoken> <d:href></d:href>

</d:locktoken></d:activelock></d:lockdiscovery> </d:prop>]]></body> </response> </step> <step> <request> <command>LOCK

/server/test/file2.html HTTP/1.1</command> <header>Connection: Keep-Alive,

TE</header> <header>TE:

trailers, deflate, gzip, compress</header> <header>Timeout:

Second-604800</header> <header>Accept-Encoding:

deflate, gzip, x-gzip, compress, x-compress</header> <header>Content-type: text/html</header>

<body><![CDATA[<?xml

version="1.0"?><A:lockinfo

xmlns:A="DAV:"><A:locktype><A:write/></A:locktype><A:lockscope><A:exclusive/></A:lockscope><A:owner><A:href></A:href></A:owner></A:lockinfo>]]></body>

</request> <response> <command>HTTP/1.0 200

OK</command> <body

varUsage="globalVariableCollection"> <![CDATA[<?xml version="1.0"

encoding="utf-8" ?><d:prop

xmlns:d="DAV:"><d:lockdiscovery><d:activelock><d:locktype><d:write/>

</d:locktype><d:lockscope><d:exclusive/></d:lockscope><d:depth>Infinity

</d:depth><d:owner> </d:owner><d:timeout>Second-604800</d:timeout><d:locktoken> <d:href> </d:href>

</d:locktoken></d:activelock></d:lockdiscovery> </d:prop>]]></body> </response> </step>

<step> <request> <command >UNLOCK

/server/test/file.html HTTP/1.1</command> <header>Connection:

Keep-Alive, TE</header> <header>TE:

trailers, deflate, gzip, compress</header> <header

varUsage="automaticVariable1">Lock-Token:

%varUsage%</header> <header>Accept-Encoding: deflate,

gzip, x-gzip, compress, x-compress</header> </request> <response> <command>HTTP/1.0 204 No

Content</command> </response> </step> <step> <request> <command >UNLOCK

/server/test/file2.html HTTP/1.1</command> <header>Connection:

Keep-Alive, TE</header> <header>TE:

trailers, deflate, gzip, compress</header> <header

varUsage="automaticVariable2">Lock-Token:

%varUsage%</header> <!--Second lock token has been stored in

automaticVariable2. --> <header>Accept-Encoding: deflate,

gzip, x-gzip, compress, x-compress</header> </request> <response> <command>HTTP/1.0 204 No

Content</command> </response> </step>

The second lock stores its token in automaticVariable2.

If it is unclear which automaticVariable the lock token has been stored in and which automatic variable should be provided for the unlock, it is possible to see in the console window which variable is being used. For example the following line will accompany a lock request:

VAR setting automaticVariable1 = opaque lock token......................

This shows that the lock token has been stored in automaticVariable1. It would then be possible to code the test case to use the correct automaticVariable to unlock.
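The numbering of automatic variables can be modelled with a small counter. The Python sketch below is a hypothetical model, not T-Processor's code: the class `AutomaticVariables` and its methods are invented for illustration, the token strings are placeholders, and the printed line only imitates the console output quoted above.

```python
class AutomaticVariables:
    """Stores each new lock token under automaticVariable<n>, n = 1, 2, ..."""

    def __init__(self):
        self.values = {}
        self.count = 0

    def store_lock_token(self, token):
        """Store a token in the next automatic variable and return its name."""
        self.count += 1
        name = "automaticVariable%d" % self.count
        self.values[name] = token
        # Imitates the console line that accompanies a lock request.
        print("VAR setting %s = %s" % (name, token))
        return name

    def lock_token_header(self, name):
        """Build the Lock-Token header for the chosen variable."""
        return "Lock-Token: " + self.values[name]

variables = AutomaticVariables()
first = variables.store_lock_token("token-for-file.html")    # placeholder token
second = variables.store_lock_token("token-for-file2.html")  # placeholder token
print(variables.lock_token_header(second))
```

The point of the model is that the variable number depends only on the order in which locks are taken, so the second LOCK in a test case must be unlocked with automaticVariable2.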

When a lock has been placed upon a resource, nobody other than the lock owner should be able to write to it. Supplying the lock token with a request proves that the user wishing to carry out the write is the owner of the lock and has the right to carry out the request. To simulate this, the lock token can be passed in with a request as a variable.

<step> <request> <command>LOCK /server/test/file.html

HTTP/1.1</command> <header>Connection: Keep-Alive,

TE</header> <header>TE:

trailers, deflate, gzip, compress</header> <header>Timeout:

Second-604800</header> <header>Accept-Encoding:

deflate, gzip, x-gzip, compress, x-compress</header> <header>Content-type:

text/html</header> <body><![CDATA[<?xml

version="1.0"?><A:lockinfo

xmlns:A="DAV:"><A:locktype><A:write/></A:locktype><A:lockscope><A:exclusive/></A:lockscope><A:owner><A:href></A:href></A:owner></A:lockinfo>]]></body>

</request> <response> <command>HTTP/1.0 200

OK</command> <body

varUsage="globalVariableCollection"> <![CDATA[<?xml version="1.0"

encoding="utf-8" ?><d:prop

xmlns:d="DAV:"><d:lockdiscovery><d:activelock><d:locktype><d:write/>

</d:locktype><d:lockscope><d:exclusive/></d:lockscope><d:depth>Infinity

</d:depth><d:owner></d:owner><d:timeout>Second-604800</d:timeout><d:locktoken> <d:href></d:href>

</d:locktoken></d:activelock></d:lockdiscovery> </d:prop>]]></body> </response> </step> <step> <request> <command> DELETE

/server/test/file.html HTTP/1.1</command> <header>Destroy: NoUndelete</header> <header>Translate:

f</header> <header>Connection:

Keep-Alive</header>

<header varUsage="automaticVariable1">If:

(<%varUsage%>)</header> <!-- Checks the lock

token supplied. If it is correct, the delete will be allowed. --> </request> <response> <command>HTTP/1.0 204 No

Content</command> <header>Content-Language:

en</header> </response> </step>

The test case above locks a resource and then tries to delete it. Because the lock token supplied is the one that was issued when the resource was locked, the delete is accepted.

Copy and Move

The Copy and Move commands both need the target location to be specified within the Destination header.

<step>

<request>

<command>COPY or MOVE /server/test/file.html

HTTP/1.1</command>

<header>Destination: /server/test/new/file.html</header>

<!-- Location to be copied

or moved to. -->

<header>Overwrite: F</header>

<header>Translate: f</header>

<header>Connection: Keep-Alive</header>

</request>

<response>

<command>HTTP/1.0 201 Created</command> <!--

Expected Response. -->

<header>Date: </header> <header>Content-Language:

en</header>

</response> </step>

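An interesting point to note is that the response code depends on whether the destination already exists. If nothing exists at the destination, a response code of 201 Created is received from the server. If the Overwrite header is set to T and an existing resource at the destination is overwritten, a response code of 204 No Content is returned instead. If the Overwrite header is set to F and the destination already exists, the request fails with 412 Precondition Failed.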
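The relationship between the Overwrite header, the state of the destination and the expected response code can be summarised in a small function. The Python sketch below follows the status codes that RFC 2518 defines for COPY and MOVE on a single resource; the function name and parameters are illustrative, and an individual server may behave differently.

```python
def expected_copy_status(overwrite, destination_exists):
    """Status code expected from COPY or MOVE of a single resource.

    overwrite: True for "Overwrite: T", False for "Overwrite: F".
    destination_exists: whether a resource is already at the Destination.
    """
    if not destination_exists:
        return 201  # Created: a new resource appears at the destination
    if overwrite:
        return 204  # No Content: an existing destination was replaced
    return 412      # Precondition Failed: Overwrite: F forbids replacing it

for overwrite in (False, True):
    for exists in (False, True):
        print(overwrite, exists, expected_copy_status(overwrite, exists))
```

A test case that expects 201 Created therefore needs a destination that does not yet exist, whichever value the Overwrite header carries.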

MKCOL

The MKCOL command is used to create collections on the specified server.

<step> <request> <command>MKCOL

/server/test/new HTTP/1.1</command> <!-- A collection called

“new” is created at the URI /server/test. --> <header>Translate:

f</header> <header>Connection:

Keep-Alive</header> </request> <response> <command>HTTP/1.0

201 Created</command> <header>Date:

</header>

<header>Content-Language: en</header> </response> </step>

The above method is fine for most test cases. However, for some test cases it is useful to create large directory structures quickly. Creating a tree 100 collections deep would need 100 separate MKCOL commands. To avoid this, a variable can be used together with a repeater, which allows the user to specify the depth of the required tree. TProcessor will then loop through the MKCOL request, creating the nested tree to the required depth. NOTE: Some headers have been left out for ease of explanation and reading.

<step>
  <assign varDefinition="nestedPath"></assign>
  <!-- The variable needs to be initialized. -->
  <request>
    <command>MKCOL /server/test/new HTTP/1.1</command>
    …..
</step>
<repeater varUsage="globalVariableIterationCount" repeatCount="10">
  <!-- The tree will be 10 levels deep. -->
  <step>
    <assign varUsage="nestedPath" varDefinition="nestedPath">%nestedPath%/dir</assign>
    <!-- The path will be added to at each iteration. It takes on the current path and adds another collection to it. -->
    <request>
      <command varUsage="nestedPath">MKCOL /server/test/new%nestedPath% HTTP/1.1</command>
      …
  </step>
</repeater>

This will create a tree 10 levels deep, e.g. /server/test/new/dir/dir/dir/dir/dir/dir/dir/dir/dir/dir.
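The effect of re-assigning the path variable on each iteration can be traced with a short loop. The following Python sketch is only a model of the assign-and-repeat pattern; `nested_mkcol_commands` and its parameters are hypothetical names.

```python
def nested_mkcol_commands(base, depth, segment="dir"):
    """List the MKCOL commands issued when building a nested tree."""
    nested_path = ""                       # the initial, empty assignment
    commands = ["MKCOL %s HTTP/1.1" % base]
    for _ in range(depth):                 # one pass per repeater iteration
        # Equivalent of assigning %nestedPath%/dir back to nestedPath.
        nested_path = nested_path + "/" + segment
        commands.append("MKCOL %s%s HTTP/1.1" % (base, nested_path))
    return commands

commands = nested_mkcol_commands("/server/test/new", 10)
print(commands[-1])
```

Each iteration appends one more path segment to the previous value, so the depth of the tree equals the repeat count.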

PropFind

The property that is being sought is defined in the body of the request.

<step> <request> <command>PROPFIND /server/test/resource1.html

HTTP/1.1</command> <header>Content-Type: text/xml</header> <header>Translate: f</header> <header>Depth: 1</header> <header>Connection: Keep-Alive</header> <body><![CDATA[<?xml version="1.0"

?><propfind

xmlns="DAV:"><prop><displayname/></prop></propfind>]]> </body> <!-- ‘Displayname’ is

the property being requested. --> </request> <response> <command>HTTP/1.0 207

Multi-Status</command> <body><![CDATA[<?xml

version="1.0" encoding="utf-8" ?> <multistatus xmlns="DAV:"

xmlns:S="http://jakarta.apache.org/slide/"> <response>

<href>/server/test/resource1.html</href> <propstat> <prop>

<displayname>resource1.html</displayname>

</prop>

<status>HTTP/1.1 200 OK</status>

</propstat> </response> </multistatus>]]> </body> <!-- The expected body of a PropFind response is normally written with entity references in place of the tag delimiters, because otherwise T-Processor would see the tags as XML tags and would try to parse them. In the example above it is shown as CDATA to make it easier to read. --> </response> </step>

When a 207 Multi-Status response code is returned, it means that there is additional information in the body that must be checked. This means that, when a 207 is expected, the body of the expected response must be declared within the XML document so that T-Processor can check the additional response codes and ensure the returned property values are as expected.

The normal structure for the response body is as above. The displayname tags contain the expected value of the displayname property. The same syntax applies to any individual user-created property. PropFinds on WebDAV properties, e.g. Etag, supportedlock, etc., each have their own body structure. For the exact syntax of the response body, please refer to the WebDAV standard. There are also examples of the response syntax within the XML test suite, located within the PropPatch/code folder. These tests perform a PropPatch on a DAV property and then a PropFind on the same property. There is a test for each DAV property, so there should also be a PropFind example for each DAV property.

Sometimes it is useful to be able to issue a PropFind that picks up whether a property exists or not. For example, there are test cases, within the XML test suite, that try to propPatch several properties. One of these requests is invalid and this should cause all following commands within the propPatch not to execute and all preceding ones to be rolled back. To achieve this, a propPatch is issued. One of the requests tries to delete a DAV property. This is not possible and should result in the request being refused, causing a rollback, canceling all changes. A PropFind is then issued. No properties should exist following the propPatch request. Therefore, the PropFind needs to pick up whether the property exists or not. Normally it would be possible to check the main response code for a 404 error. However, as a PropFind always returns a 207 Multi-Status, the body of this response must be checked. It contains a secondary response code; the actual result of the PropFind.

An example of this was shown in the test above. In it, a 207 Multi-Status response is expected from the server. Within this, the body is expected to contain a 200 OK response code indicating that the property has been found successfully.

<step> <request>

<!--

Command and headers left out for ease of explanation --> …. <body><![CDATA[<?xml

version="1.0" ?><propfind

xmlns="DAV:"><prop><test/></prop></propfind>]]></body> </request> <response> <command>HTTP/1.0

207 Multi-Status</command> ….. <body

varUsage="globalVariableCollection,userNumber"> <![CDATA[<?xml

version="1.0" encoding="utf-8" ?> <multistatus

xmlns="DAV:"

xmlns:S="http://jakarta.apache.org/slide/"> <response> <href>/server/test/resource1.html</href> <propstat> <prop> </prop> <status>HTTP/1.1

200 OK</status> </propstat> <propstat> <prop> <test/> </prop> <status>HTTP/1.1

404 Not Found</status> </propstat> </response> </multistatus>]]> </body> <!-- A second propstat element is expected. This will contain the 404 Not Found response code. If no property has been found, the step will pass. However, if the property ‘test’ is discovered, an error will be thrown. --> </response> </step>

An example of the PropPatch tests on WebDAV properties is shown above. It illustrates the main syntax for a PropFind that expects the property not to exist. NOTE: Certain elements that have already been explained, such as the exact syntax of the PropFind request, have been left out for ease of explanation.
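Because the overall response code of a PropFind is always 207, the per-property result has to be read from the propstat elements in the body. The Python sketch below shows one way to extract those secondary status codes with the standard `xml.etree.ElementTree` module; `property_statuses` is a hypothetical helper and the sample body is invented for the demonstration.

```python
import xml.etree.ElementTree as ET

DAV = "{DAV:}"  # ElementTree notation for names in the DAV: namespace

def property_statuses(multistatus_xml):
    """Map each property name to the status code of its propstat element."""
    statuses = {}
    root = ET.fromstring(multistatus_xml)
    for propstat in root.iter(DAV + "propstat"):
        prop = propstat.find(DAV + "prop")
        status = propstat.findtext(DAV + "status", default="")
        parts = status.split()              # e.g. ["HTTP/1.1", "404", ...]
        if prop is None or len(parts) < 2:
            continue
        for child in prop:
            statuses[child.tag.replace(DAV, "", 1)] = int(parts[1])
    return statuses

body = """<multistatus xmlns="DAV:"><response>
<href>/server/test/resource1.html</href>
<propstat><prop><displayname>resource1.html</displayname></prop>
<status>HTTP/1.1 200 OK</status></propstat>
<propstat><prop><test/></prop>
<status>HTTP/1.1 404 Not Found</status></propstat>
</response></multistatus>"""

print(property_statuses(body))
```

A check for a missing property then reduces to confirming that its entry in the result is 404.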

When searching for certain properties, the result can change from machine to machine, and it may also differ with each PropFind. One example is a PropFind on an Etag. An Etag is unique to the machine that ran the test, so the test cannot be coded with an expected Etag in the body of the PropFind response. One option is simply to leave out the expected body in the response; T-Processor will then pass the test provided a 207 has been received, without checking the body. However, as explained above, sometimes it is necessary to check the additional response codes and the body of the received response. In this case it is possible to use a ‘*’ wildcard character. T-Processor will then pass any value that it receives for that element.

<step> <user

varUsage="userNumber">user%userNumber%</user> <password

varUsage="userNumber">user%userNumber%</password>

<request> <command

varUsage="globalVariableCollection,userNumber">PROPFIND

/server/test/resource1.html HTTP/1.1</command> <header>Accept-Language: en-us</header> <header>Content-Type: text/xml</header> <header>Translate: f</header> <header>Depth: 1</header> <header>Connection:

Keep-Alive</header> <body><![CDATA[<?xml

version="1.0" ?><propfind xmlns="DAV:"><prop><getetag/></prop></propfind>]]></body>

<!-- PropFind request on the Etag

property. -->

</request>

<response> <command>HTTP/1.0 207 Multi-Status</command> <body

varUsage="globalVariableCollection,userNumber"> <![CDATA[<?xml

version="1.0" encoding="utf-8" ?><multistatus

xmlns="DAV:"

xmlns:S="http://jakarta.apache.org/slide/"> <response> <href>/server/test/resource1.html</href> <propstat> <prop> <getetag>*</getetag>

<!-- The * indicates that ANY value returned as an Etag will be

accepted by T-Processor. --> </prop><status>HTTP/1.1

200 OK</status> </propstat> </response> </multistatus>]]> </body>

</response> </step>

This wildcard also works for text properties. This example shows a PropFind on displayname. The actual displayname is not of interest, just the fact that one exists. NOTE: Just the response is shown here.

<response> <command>HTTP/1.0 207

Multi-Status</command> <body

varUsage="globalVariableCollection,userNumber"><![CDATA[<?xml

version="1.0" encoding="utf-8" ?> <multistatus xmlns="DAV:"

xmlns:S="http://jakarta.apache.org/slide/">

<response>

<href>/server/test/resource1.html</href>

<propstat> <prop> <displayname>*</displayname>

<!-- The * indicates that ANY value returned as a displayname will

be accepted by T-Processor. -->

</prop>

<status>HTTP/1.1 200 OK</status>

</propstat>

</response> </multistatus>]]> </body> </response>
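The wildcard comparison can be pictured as an element-by-element walk over the expected and received bodies, in which an expected text of ‘*’ accepts any received text. The Python sketch below is an assumed model of such a comparison, not T-Processor's algorithm; `matches` and `body_matches` are hypothetical names, and the sketch ignores attributes and element order differences.

```python
import xml.etree.ElementTree as ET

def matches(expected, actual):
    """Compare two elements; an expected text of '*' accepts any text."""
    if expected.tag != actual.tag:
        return False
    expected_text = (expected.text or "").strip()
    actual_text = (actual.text or "").strip()
    if expected_text != "*" and expected_text != actual_text:
        return False
    expected_children = list(expected)
    actual_children = list(actual)
    if len(expected_children) != len(actual_children):
        return False
    return all(matches(e, a)
               for e, a in zip(expected_children, actual_children))

def body_matches(expected_xml, actual_xml):
    """Parse both bodies and compare them from the root element down."""
    return matches(ET.fromstring(expected_xml), ET.fromstring(actual_xml))

expected = '<prop xmlns="DAV:"><getetag>*</getetag></prop>'
received = '<prop xmlns="DAV:"><getetag>"abc123"</getetag></prop>'
print(body_matches(expected, received))
```

Only the element carrying the wildcard is relaxed; every other element in the expected body still has to match exactly.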

PropPatch

PropPatch commands require a body detailing the new property and its value.

<step>

<request> <command>PROPPATCH

/server/test/file.html HTTP/1.1</command> <header>Content-Type:

text/xml</header> <header>Translate:

f</header> <header>Pragma:

no-cache</header> <header>Connection:

close</header> <body><![CDATA[<?xml

version="1.0" encoding="utf-8"?>

<D:propertyupdate xmlns:D="DAV:"> <D:set><D:prop>

<D:displayname>test</D:displayname></D:prop></D:set>

</D:propertyupdate>]]> <!-- This sets the property

displayname to “test”. --> </body>

</request>

<response> <command>HTTP/1.0 207

Multi-Status</command> <header>Date: </header> <header>Content-Language:

en</header> <body> <![CDATA[<?xml

version="1.0" encoding="utf-8" ?><d:multistatus

xmlns:d="DAV:"><d:response><d:href>/server/test/file.html</d:href><d:propstat><d:prop><displayname/></d:prop>

<d:status>HTTP/1.1 200 OK</d:status></d:propstat></d:response>

</d:multistatus>]]> </body>

</response> </step>

If the property does not exist, it is automatically created and then set.

Cleanup

For better interoperability, all test cases should create the resources they require and should not expect to find and use already existing collections, resources or lock tokens. Correspondingly, within the cleanup phase, the test cases should remove all the resources and lock tokens they created. Currently all test cases make only the following assumptions:

1. That the URL is made up as follows: http://host:port/server/rootCollection.

2. This URL is valid and already exists.

All of these values (host, port, server, root collection) can be configured for TProcessor.

Any commands used to delete resources created during the test should be included within the cleanup. The cleanup tags are not mandatory; deletion can be done in a step. However, using cleanup makes for a better structure.

<cleanup>

<step> <request>

<command> DELETE /server/test/file.html

HTTP/1.1</command>

<header>Destroy: NoUndelete</header>

<header>Translate: f</header>

<header>Connection: Keep-Alive</header>

</request>

<response>

<command>HTTP/1.0 204 No Content</command>

<header>Content-Language: en</header>

</response>

</step> </cleanup> <!-- Without these tags the step would

carry out the same function. However, if there are many steps needed to

clean up remaining resources it will result in better-structured and more

readable code. -->

Combined

All the above syntax can be linked to make a very powerful test case.

<?xml version="1.0" encoding="UTF-8"?> <!-- Defining

the use of XML --> <!DOCTYPE …..dtd"> <!-- Location of DTD. --> <test> <specification> <abstract>A collection is created,

a file is put to the collection, the collection is then

deleted.</abstract> <pre-Requisite>Server must be

started and collection must be created and declared in T-Processor

script</pre-Requisite> <description>1) Collection created

2)File put 3)Collection deleted </description> <expectedResult>User should be able

to put a file. 200 OK expected. </expectedResult> </specification> <!-- Brief

explanation of the test case --> <step> <request> <command

varUsage="globalVariableCollection,globalVariableServerName">

MKCOL /%globalVariableServerName%/%globalVariableCollection%/test

HTTP/1.1</command> <!-- Use of globalVariableCollection and

globalVariableServerName. Replaced at runtime by values passed in by

T-Processor script. -->

<header>Translate: f</header>

<header>Connection: Keep-Alive</header> </request> <response>

<command>HTTP/1.0 201 Created</command> <!-- Response

code 201 expected back from

server

as a result of the MKCOL request.

-->

<header>Date: </header>

<header>Content-Language: en</header> </response> </step> <step>

<request> <command varUsage

="globalVariableCollection,globalVariableServerName">PUT /%globalVariableServerName%/%globalVariableCollection%/test/file.html

HTTP/1.1</command> <header>Translate: f</header>

<header>Connection: Keep-Alive</header> <body

fileReference="C:\test.html"> </body> </request> <response> <command>HTTP/1.0

200 OK</command> <!-- 200 OK Response code expected back from server after Put

command. --> <header>Date: </header> <header>Content-Language: en</header>

</response> </step>

<cleanup>
  <step>
    <request>
      <command varUsage="globalVariableCollection,globalVariableServerName">DELETE /%globalVariableServerName%/%globalVariableCollection%/test HTTP/1.1</command>
      <header>Destroy: NoUndelete</header>
      <header>Translate: f</header>
      <header>Connection: Keep-Alive</header>
    </request>
    <response>
      <command>HTTP/1.0 204 No Content</command>
      <!-- 204 No Content response code expected back from server after Delete command. -->
      <header>Date: </header>
      <header>Content-Language: en</header>
    </response>
  </step>
</cleanup>
<!-- The cleanup deletes the initial collection, meaning its contents, the html file, are deleted as well. -->
</test>

The code above makes a collection, Puts a file to the new collection and then deletes the collection.

To simulate multiple users, threads and repeaters are incorporated.

Within this test case, %globalVariableUsers% collections are created first, one per user. That number of users then try simultaneously to put an HTML document to their own collection. This is repeated %globalVariableIterationCount% times. When the threads have all finished, the collection created by the first MKCOL is deleted, and all resources created underneath it are automatically deleted with it.